Use multiple models

The meta for getting the most out of AI in 2026.

I’ll start by explaining my current AI stack and how it’s changed in recent months. For chat, I’m using a mix of:

GPT 5.2 Thinking / Pro: My most frequent AI use is getting information. This is often a detail about a paper I’m remembering, a method I’m verifying for my RLHF Book, or some other niche fact. I know GPT 5.2 can find it if it exists; I use Thinking for queries I expect to be easier and Pro when I want to make sure the answer is right. GPT Pro in particular has been the indisputable king for research for quite some time; Simon Willison’s coining of it as his “research goblin” still feels right.

I never use GPT 5 without Thinking, nor any other OpenAI chat models. Maybe I need to invest more in custom instructions, but the non-thinking models always come across as a bit sloppy relative to the competition, and I quickly churn. I’ve heard gossip that the Thinking and non-Thinking GPT models are even developed by different teams, so it would make sense that they end up meaningfully different.

I also rarely use Deep Research from any provider, opting instead for GPT 5.2 Pro with more specific instructions. In the first half of 2025 I almost exclusively used ChatGPT’s thinking models, but Anthropic and Google have done good work to win back some of my attention.

Claude 4.5 Opus: Chatting with Claude is where I go for basic code questions, visualizing simple data, and getting richer feedback on my work or decisions. Opus’s tone is particularly refreshing when I’m trying to push the models a bit, something GPT 4.5 used to provide for me (I was a power user of that model in H1 2025). Claude Opus 4.5 isn’t particularly fast relative to many models out there, but if you’re used to the GPT Thinking models, as I am, it feels much faster (even with extended thinking always on, as I use it) and is sufficient for this type of work.

Gemini 3 Pro: Gemini is for everything else: explaining concepts I know are well covered in the training data (where minor hallucinations are okay, e.g. the rabbit holes I used to go down on Google), multimodality, and sometimes very long-context work (though GPT 5.2 Thinking took a big step here, so the gap is smaller). I still open and use the Gemini app regularly, but I’m a bit less locked in to it than to the other two.

Relative to ChatGPT, I sometimes feel like Gemini’s search mode is a bit off. It could be a product decision about how the information is presented to the user, but GPT’s thorough, repeated search over multiple sources instills a confidence I don’t get from Gemini for recent or research information.

Grok 4: I use Grok roughly monthly to try to find some piece of AI news or alpha I recall from browsing X. Grok is likely underrated in terms of its intelligence (Grok 4 in particular was an impressive technical release), but it hasn’t had sticky or differentiating product features for me.

For images I’m using a mix of mostly Nano Banana Pro and sometimes GPT Image 1.5 when Gemini can’t quite get it.

For coding, I’m primarily using Claude Opus 4.5 in Claude Code, but I still sometimes find myself needing OpenAI’s Codex or even multi-LLM setups like Amp. Over the holiday break, Claude Opus helped me update all the plots for The ATOM Project (which included substantial processing of our raw data from scraping HuggingFace), make substantive edits to the RLHF Book (where I found it quite a good editor when given detailed instructions on what to do), and handle other side projects and life-organization tasks. I recently published a piece explaining my current obsession with Claude Opus 4.5; I recommend reading it if you haven’t had the chance.

In short, I pay for the best models and value marginal intelligence far more than speed, particularly because many of the tasks I do are ones the models are only just starting to do well. As these capabilities diffuse in 2026, speed will become more of a determining factor in model selection.

Peter Wildeford had a post on X with a nice graphic that reflected a very similar usage pattern:

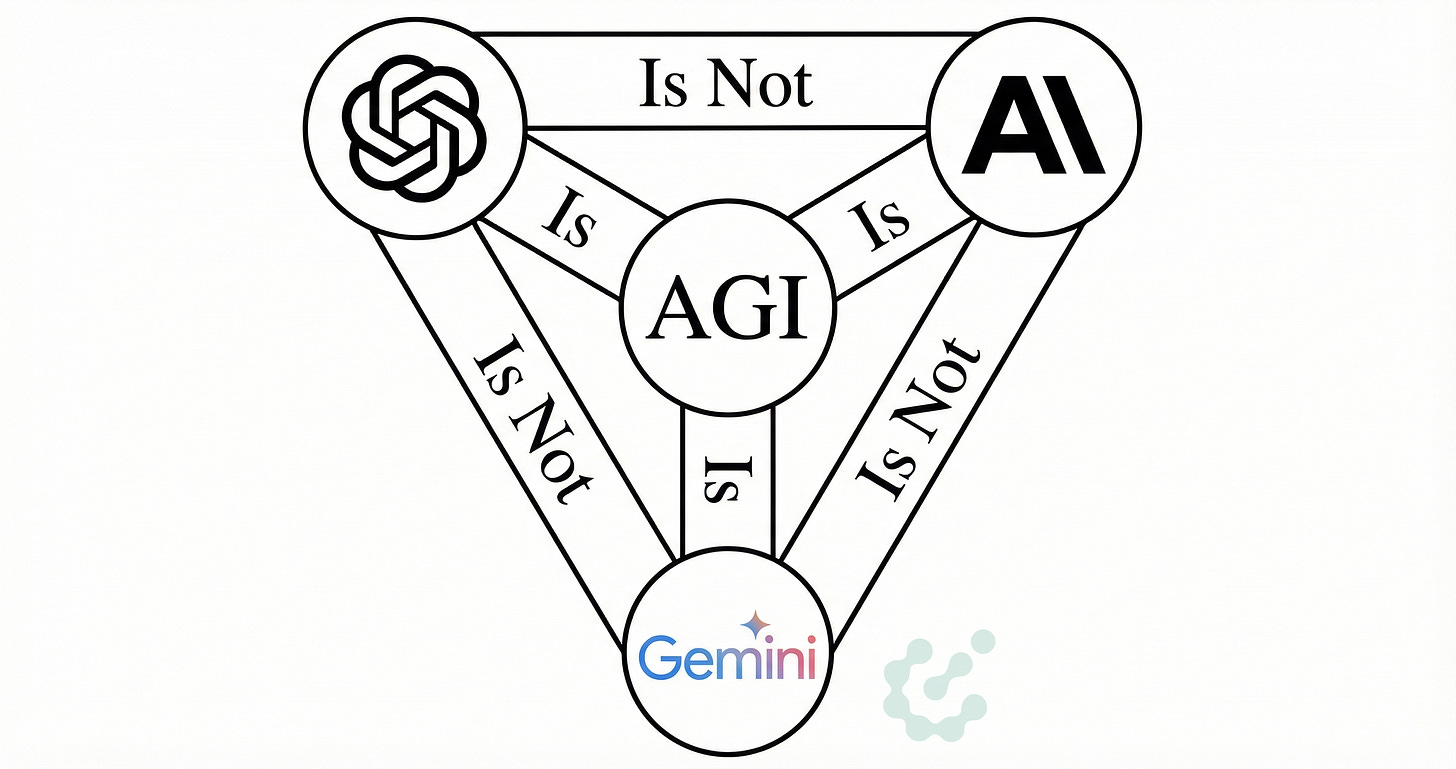

Across all of these categories, it doesn’t feel like I could get away with using just one of these models without taking a substantial haircut in capabilities. This is a strong endorsement of the notion that AI is jagged, i.e. its strong capabilities are spread unevenly, and it is also an unusual way to have to use a product: each model is jagged in its own way. Through 2023, 2024, and the earlier days of modern AI, it often felt like there was a single winning model, which made keeping up easier. Today, it takes a lot of work and fiddling to make sure you’re not missing out on capabilities.

The working pattern that most reinforces this multi-model era for me is how often a problem I hit with one AI model is solved by passing the same query to a peer model. Models get stuck: some can’t find a bug, some coding agents keep pursuing a weird, suboptimal approach, and so on. In these cases, it’s quite common for me to boot up a peer model or agent and have it unblock the project.

I’d expect this kind of model or agent switching to help occasionally, but the fact that it helps regularly means the models are all quite close to being able to solve the tasks I’m throwing at them; they’re just not quite there. The intuition: view each task as having some probability of success for each model. If that probability were low for every model, switching would almost always fail. For switching to regularly solve the task, each model must have a fairly high probability of success.
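To make that intuition concrete, here is a small Python sketch. It assumes, as a simplification, that each model’s success on a task is independent of the others’ and captured by a single probability p; real model failures are surely correlated, so treat the numbers as illustrative only. Under that assumption, the chance that a peer model rescues a task the first model failed on is just the peer’s own p, so switching can only work regularly when p is fairly high.

```python
import random
from math import prod


def p_any_success(probs: list[float]) -> float:
    """Chance that at least one model solves the task, assuming independent successes."""
    return 1.0 - prod(1.0 - p for p in probs)


def simulate_rescue_rate(p_first: float, p_peer: float,
                         trials: int = 100_000, seed: int = 0) -> float:
    """Monte Carlo estimate of P(peer model succeeds | first model failed)."""
    rng = random.Random(seed)
    failures = rescues = 0
    for _ in range(trials):
        if rng.random() >= p_first:      # the first model fails on this task
            failures += 1
            if rng.random() < p_peer:    # the peer model, given the same query, succeeds
                rescues += 1
    return rescues / failures if failures else 0.0


if __name__ == "__main__":
    for p in (0.1, 0.4, 0.7):
        print(f"p={p}: peer rescues ~{simulate_rescue_rate(p, p):.2f} of failures; "
              f"either of two models succeeds {p_any_success([p, p]):.2f}")
```

With p = 0.1 a switch rescues only about one failure in ten, while with p = 0.7 it rescues about seven in ten, which is the regime where swapping models feels like a routine fix rather than a lucky one.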

For the time being, tasks at the frontier of AI capabilities seem likely to keep this model-switching meta, but the frontier is a moving set of capabilities. The tasks I need to switch models for now will soon be solved by every model in the next generation.

I’m very happy with the value I’m getting out of my hundreds of dollars of AI subscriptions, and you should likely consider doing the same if you work in a domain that sounds similar to mine.

On the other side, while frontier models push to make today’s cutting-edge tasks 100% reliable, open models push to undercut frontier prices. Coding plans built on open models tend to cost a tenth of the frontier lab plans, or less. It’s a boring take, but for the next few years I expect this gap to remain largely steady, with a lot of people getting insane value out of the cutting-edge models. It’ll take longer for the open-model undercut to hurt the frontier labs, even though, from first principles, their R&D and deployment costs look like a precarious position to be in. Open models haven’t been remotely close to Claude 4.5 Opus or GPT 5.2 Thinking in my use.

The other factor is that 2025 gave us Deep Research agents, code/CLI agents, and search (and Pro) tool-use models, and there will almost certainly be new form factors released in 2026 that we end up using almost every day. Historically, closed labs have been better at shipping new products into the world, but with better open models this should spread more widely, since strong product capabilities are distributed across the tech ecosystem. To capitalize on this, you need to invest time (and money) trying all the cutting-edge AI tools you can get your hands on. Don’t be loyal to one provider.

i commented on your continual learning piece back in august about running multiple claude code instances, and now, given the context of your post, i want to share what i've built since then, because i think it relates. disclaimer: i developed this for workflows i encounter frequently in my work, so it's shaped by that and evolves basically every day. to start: it's not about "fully switching" to another model when you're stuck. it's more about building a system where opus first establishes a context-aware intent and then orchestrates calls to specialized proprietary tools or to other model providers' apis while maintaining the full context itself. each external call is clean and isolated and adds, in a curated way, to opus's picture of the conversation's state. if you've read about recursive language models, it's kind of similar to that but more hand-held. so one main thing i've learned: opus needs to know! it's the main expert, the shot-caller. the external models are called either (a) for facts, where they just retrieve information or state what they see, or (b) as "independent consultants" operating in isolated contexts (see what i mean below), whose opinions may or may not be relevant or useful; opus (after i make sure it's intent-aligned) decides what to actually use.

so let me explain what i mean. my work is research-, documentation- and communications-heavy. like for everybody right now, claude opus via claude code is my main interface. opus is amazing at capturing signal (or intent) and working agentically and coherently on longer-running tasks, but it needs to know what to use to accomplish a given task, and it needs to be reminded not to one-shot but to work sequentially through tasks by calling external tools. this has been common knowledge since the ralph wiggum loop blew up, at the latest. so for whenever opus needs something it can't do well (or at all), like deep web research, transcribing a voice memo, or visually analyzing a pdf, my system has skills defined that describe or call tools, so opus shells out to proprietary tools i built or to external model apis. the latter are simple python wrappers for gpt and gemini that claude calls like any cli tool.

the key things, therefore, are intent-alignment (which people do via planning mode or spec-driven development), context-surfacing (curating claude.md, skill definitions, hooks), context-isolation (subagents, other model api calls) and calibration (mostly via a mix of skill definitions and claude.md). one thing i've learned about intent-alignment: at the start of a session, don't let opus give you a 500-word synthesis of the current state. align on intent fast, then bounce back and forth in shorter iterations. i call this "high signal" mode: information-dense, no fluff. this matters because when external model opinions come in, opus needs a strong anchor on what i actually want before it starts integrating them.

each project starts with a signal—could be a voice memo, a meeting, a forwarded chat. i process it via skills (transcribe, search emails), then run discovery via subagents to find what i've already done on this topic. files accumulate as i work; each project folder gets a CLAUDE.md with curated context. when sessions run long, a handover skill creates state files for the next session. so before opus calls any external api, it already knows what's going on.
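As a rough illustration of the handover step, here is a hypothetical Python sketch. The comment doesn’t describe the file format, so the section names (goal, done, next steps, key files) and the `handover-YYYY-MM-DD.md` naming are assumptions. The idea is simply that the next session reads the newest state file instead of reconstructing context from scratch.

```python
from datetime import date
from pathlib import Path
from typing import Optional


def write_handover(project_dir: Path, goal: str, done: list[str],
                   next_steps: list[str], key_files: list[str],
                   today: Optional[date] = None) -> Path:
    """Write a markdown state file for the next session (hypothetical format)."""
    day = (today or date.today()).isoformat()
    lines = [f"# handover {day}", "", "## goal", goal, "", "## done"]
    lines += [f"- {item}" for item in done]
    lines += ["", "## next steps"] + [f"- {item}" for item in next_steps]
    lines += ["", "## key files"] + [f"- {name}" for name in key_files]
    path = project_dir / f"handover-{day}.md"  # same-day handovers overwrite each other
    path.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return path


def latest_handover(project_dir: Path) -> Optional[Path]:
    """Return the newest handover file; ISO dates sort correctly as strings."""
    files = sorted(project_dir.glob("handover-*.md"))
    return files[-1] if files else None
```

A session-start step could then load `latest_handover(project_dir)` alongside the project's CLAUDE.md.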

so on a basic level, this is how i call external tools or apis. the key is CLI arguments. when opus needs to call out, its internal state (it has listed relevant files, read some fully and some partially, and gotten an index of potentially relevant files from subagents) lets it decide agentically which files are relevant for this isolated research task. for gpt it looks like: `--file notes.md --file state.md --file mail-chain.md --task "research X"`. the script stuffs these into the api call with xml markers so gpt knows there's a main task (the anchor) and context files (clearly named and hierarchized), then prints the result to stdout; claude reads it, decides what's actually useful given the original intent, and continues. the external model gets a clean, isolated slice; it doesn't need the conversation history because claude curated exactly what it needs.
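A minimal sketch of such a wrapper, assuming Python’s standard `argparse`. The tag names (`<task>`, `<context>`, `<file>`), the `rank` attribute (taken from the order of the `--file` flags), and the closing instruction line are hypothetical choices, not the commenter’s actual script. It only builds and prints the packed prompt; the GPT or Gemini API call is left as a comment so the sketch runs without credentials.

```python
import argparse
import sys
from pathlib import Path
from xml.sax.saxutils import quoteattr


def build_prompt(task: str, files: list[Path]) -> str:
    """Wrap the task (the anchor) and the context files in XML-style markers."""
    parts = [f"<task>\n{task}\n</task>", "<context>"]
    for rank, path in enumerate(files, start=1):  # earlier --file flags rank higher
        body = path.read_text(encoding="utf-8", errors="replace")
        parts.append(f'<file name={quoteattr(path.name)} rank="{rank}">\n{body}\n</file>')
    parts.append("</context>")
    parts.append("Answer the task using the context files; lower rank numbers matter more.")
    return "\n".join(parts)


def main(argv=None) -> None:
    parser = argparse.ArgumentParser(description="Pack a task and context files into one prompt.")
    parser.add_argument("--task", required=True, help="the main task (the anchor)")
    parser.add_argument("--file", action="append", type=Path, default=None,
                        help="context file; repeat the flag for several files")
    args = parser.parse_args(argv)
    prompt = build_prompt(args.task, args.file or [])
    # A real wrapper would send `prompt` to a model API here and print the reply;
    # this sketch prints the packed prompt instead.
    print(prompt)


if __name__ == "__main__" and len(sys.argv) > 1:  # run as a CLI only when flags are passed
    main()
```

For example, `python pack_prompt.py --file notes.md --file state.md --task "research X"` (the filename is hypothetical) would print the task block followed by both files in rank order. Escaping only the attribute, not the file bodies, keeps code and markup in the context files intact for the model.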

the models have different strengths. gpt-5 always has web search, so i use it for anything needing current information—market research, fact-checking, finding docs. gemini is better for multimodal (pdfs, images, audio transcription). the wrappers have presets: for gpt it's reasoning effort (`light`/`balanced`/`deep`), for gemini it's model selection plus thinking-level. most queries use `light`—quick 1-minute lookups without even attaching context files.
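One way such presets might be expressed, as a hedged Python sketch. The preset names `light`/`balanced`/`deep` come from the comment, but the effort values they map to, the `attach_context` flag, the Gemini preset names, and the model IDs are illustrative placeholders, not the commenter’s config or verified API values. The wrapper would translate a resolved preset into whatever parameters the provider’s SDK actually expects.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GptPreset:
    reasoning_effort: str  # placeholder value for the provider's reasoning-effort setting
    attach_context: bool   # whether to send --file context at all


@dataclass(frozen=True)
class GeminiPreset:
    model: str             # placeholder model ID, not verified against the API
    thinking_level: str    # placeholder value for the provider's thinking setting


GPT_PRESETS = {
    "light": GptPreset(reasoning_effort="low", attach_context=False),  # quick lookups
    "balanced": GptPreset(reasoning_effort="medium", attach_context=True),
    "deep": GptPreset(reasoning_effort="high", attach_context=True),
}

GEMINI_PRESETS = {
    "light": GeminiPreset(model="gemini-flash-placeholder", thinking_level="low"),
    "balanced": GeminiPreset(model="gemini-pro-placeholder", thinking_level="low"),
    "deep": GeminiPreset(model="gemini-pro-placeholder", thinking_level="high"),
}


def resolve_preset(provider: str, name: str = "light"):
    """Look up a preset, defaulting to `light` as most queries do."""
    tables = {"gpt": GPT_PRESETS, "gemini": GEMINI_PRESETS}
    if provider not in tables:
        raise ValueError(f"unknown provider: {provider!r}")
    try:
        return tables[provider][name]
    except KeyError:
        raise ValueError(
            f"unknown preset {name!r}; choose from {sorted(tables[provider])}") from None
```

Keeping presets as frozen data rather than scattered flags means the calling agent only has to pick a name, which is the kind of small, stable interface a skill definition can describe.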

a workflow i use constantly: voice memos while walking, transcribed via gemini, then project discovery spawns parallel subagents to map the workspace and find what i've already done. half the time it surfaces useful state from weeks ago that i'd forgotten. the system acts as external memory.
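A stripped-down stand-in for that discovery step, assuming plain file searches in place of subagents. The sketch runs two searches with different scopes (file names, and the contents of markdown files) in parallel threads and keeps the hits per scope, which shows how one scope can surface files another misses. The scope choices and function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def search_names(root: Path, term: str) -> set[Path]:
    """Scope 1: files whose name mentions the term."""
    needle = term.lower()
    return {p for p in root.rglob("*") if p.is_file() and needle in p.name.lower()}


def search_markdown(root: Path, term: str) -> set[Path]:
    """Scope 2: markdown files whose contents mention the term."""
    needle = term.lower()
    hits = set()
    for p in root.rglob("*.md"):
        try:
            if needle in p.read_text(encoding="utf-8", errors="ignore").lower():
                hits.add(p)
        except OSError:  # unreadable file, or a directory whose name ends in .md
            continue
    return hits


def discover(root: Path, term: str) -> dict[str, set[Path]]:
    """Run each scope in parallel and return its hits, keyed by scope name."""
    scopes = {"names": search_names, "markdown": search_markdown}
    with ThreadPoolExecutor(max_workers=len(scopes)) as pool:
        futures = {label: pool.submit(fn, root, term) for label, fn in scopes.items()}
        return {label: fut.result() for label, fut in futures.items()}
```

Keeping results per scope, rather than only their union, makes it possible to log which scope actually found the useful file.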

what i've been harnessing lately are hooks that log invocations of skills and subagents. i log every skill invocation to a jsonl file (timestamp, skill name, args, session id). immediately after each skill, a hook fires that calls haiku (basically free via the claude agent sdk) to infer the purpose of that invocation from the conversation context. then at session end, another hook feeds the entire transcript to gemini 3 flash and asks it to assess whether each skill actually helped, what the user response was, and whether the task progressed. the assessments get written back to the jsonl so i can query them later and improve the skills based on the semantic patterns observed. after a few hundred sessions, heuristics accumulate, e.g. that parallel searches with different scopes catch things single searches miss. the system builds patterns from its own usage data, and i can make my skills and subagents better.
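A minimal Python sketch of the logging side, assuming one JSON object per line. The `id` field, the shape of the assessment dict (e.g. a `helped` flag), and the function and skill names are hypothetical additions for illustration. The model calls that produce the inferred purpose and the session-end assessment (haiku and gemini 3 flash in the comment) are out of scope; the sketch only shows appending records, writing assessments back, and querying them.

```python
import json
import time
import uuid
from pathlib import Path
from typing import Optional


def log_invocation(log_path: Path, skill: str, args: dict, session_id: str) -> str:
    """Append one skill invocation to the jsonl log and return its id."""
    record = {"id": uuid.uuid4().hex, "timestamp": time.time(),
              "skill": skill, "args": args, "session_id": session_id}
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return record["id"]


def read_log(log_path: Path) -> list[dict]:
    """Parse every non-empty line of the log."""
    lines = log_path.read_text(encoding="utf-8").splitlines()
    return [json.loads(line) for line in lines if line.strip()]


def attach_assessment(log_path: Path, invocation_id: str, assessment: dict) -> bool:
    """Merge an assessment into the matching record by rewriting the file.

    Not safe against concurrent writers; fine for a single session-end hook.
    """
    records = read_log(log_path)
    found = False
    for rec in records:
        if rec["id"] == invocation_id:
            rec["assessment"] = assessment
            found = True
    log_path.write_text("".join(json.dumps(r) + "\n" for r in records), encoding="utf-8")
    return found


def helped_rate(log_path: Path, skill: str) -> Optional[float]:
    """Share of assessed invocations of `skill` marked as helpful."""
    assessed = [r for r in read_log(log_path)
                if r["skill"] == skill and "assessment" in r]
    if not assessed:
        return None
    return sum(bool(r["assessment"].get("helped")) for r in assessed) / len(assessed)
```

A per-skill helpfulness rate like this is the simplest query; grouping by the inferred purpose or by the args would be the natural next step toward the semantic patterns the comment describes.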

i think the interesting thing here is not the multi-model part per se but the architecture as a whole: opus as the main expert, external models as consultants that get clean isolated calls, and a feedback loop that tracks what actually works. intent-aligned opus decides what to use from the external opinions, sometimes everything, sometimes nothing. claude cowork will probably absorb some of this, but there's still a lot of value in building your own stack because the models are so jagged.

In a much-recommended comment I wrote in last Friday's Financial Times, on an article entitled "DeepSeek rival’s shares double in debut as Chinese AI companies rush to list" (https://www.ft.com/content/a4fc6106-5a61-4a89-9400-c17c87fb1920#comments-anchor), I replied as follows:

You fundamentally misunderstand the emerging character of the Chinese LLM community. It is not so much competitive as 'co-opetitive'. Being Open Weight, they willingly share architectural software improvements, whilst each individual LLM concentrates on a slightly different, yet complementary, area of expertise. What is emerging is a Dragon Swarm whose watchword is consilience. DeepSeek is the Architect Dragon, whose Open‑Weight 'foundation model excellence' (rich in willingly shared software design features) will be massively reinforced when R2 drops in mid-February, not coincidentally timed with the advent of the Year of the Fire Horse. DeepSeek is the bedrock of the swarm, the 'Mother of Dragons' if you will. Aside from being the technical supremo, it is optimized for all-round reasoning and general intelligence. MiniMax is the Creative & Sonic Dragon, a specialist in multimodal creativity: text, voice, music and immersive content synthesis. DeepSeek and MiniMax (and Qwen, Kimi, Ubiquant, 01.AI, ZiAI, SenseTime and more) are not so much rivals as members of a Dragon Swarm of Open Weight LLMs covering an extraordinarily wide range of expertise.